Chatbot Training Data Services

Each poem is annotated with whether or not it successfully communicates the idea of its metaphorical prompt. Annotations like these help analyze how these capabilities mesh together in a natural conversation and compare the performance of different architectures and training schemes. Building a chatbot in code can be difficult for people without development experience, so sample code from experienced developers is a useful entry point. New off-the-shelf datasets are being collected across all data types: text, audio, image, and video.

Another example is a set of Quora questions used to determine whether pairs of question texts actually correspond to semantically equivalent queries.
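To make the question-pair task concrete, here is a minimal sketch of a naive lexical baseline: it scores a pair by the Jaccard overlap of their word sets and calls it a duplicate above a threshold. The `jaccard` and `predict_duplicate` helpers, the `0.5` threshold, and the sample pairs are all hypothetical illustrations, not part of any official dataset tooling.

```python
import re

def tokens(text: str) -> set[str]:
    # Lowercase and keep alphanumeric word tokens only.
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def jaccard(a: str, b: str) -> float:
    """Size of the shared word set divided by the size of the combined word set."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)

def predict_duplicate(q1: str, q2: str, threshold: float = 0.5) -> bool:
    # Hypothetical threshold; it would need tuning on labeled pairs.
    return jaccard(q1, q2) >= threshold

# Hypothetical (question1, question2, is_duplicate) examples.
pairs = [
    ("How do I learn Python quickly?", "What is the fastest way to learn Python?", 1),
    ("How do I learn Python quickly?", "How tall is Mount Everest?", 0),
]
for q1, q2, label in pairs:
    print(f"{jaccard(q1, q2):.2f}", predict_duplicate(q1, q2), label)
```

The first pair is a genuine paraphrase yet scores only about 0.17, which shows why lexical overlap alone is a weak signal for semantic equivalence and why labeled pairs are valuable for training stronger models.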

- Contextual data allows your company to take a local approach on a global scale.

- There is a noticeable gap between existing dialog datasets and real-life human conversations.

- Users want information on demand, which is exactly what chatbots deliver.

- The development of these datasets was supported by the track sponsors and the Japanese Society for Artificial Intelligence (JSAI).

For example, if we are training a chatbot to assist with booking travel, we could fine-tune the model behind ChatGPT on a dataset of travel-related conversations. This would let the model generate responses that are more relevant and accurate for booking travel. A world-class conversational AI model needs to be trained on high-quality, relevant datasets. Over more than two decades, SunTec has accumulated extensive expertise, experience, and knowledge in gathering, categorising, and processing large volumes of data.
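A fine-tuning dataset of travel conversations is usually just a file of structured examples. The sketch below assumes the chat fine-tuning format used by OpenAI (one JSON object per line, each with a `messages` list of `role`/`content` pairs); check your provider's current documentation before relying on it. The conversations, `SYSTEM_PROMPT`, and the output filename are hypothetical.

```python
import json

# Hypothetical travel-booking conversations; in practice these would come
# from your own consented, anonymized support logs.
conversations = [
    [
        ("user", "I need a flight from Boston to Denver next Friday."),
        ("assistant", "Sure. Do you prefer a morning or an evening departure?"),
    ],
    [
        ("user", "Can I change my hotel booking to two nights?"),
        ("assistant", "Yes. Please share your booking reference and I'll update it."),
    ],
]

SYSTEM_PROMPT = "You are a helpful travel-booking assistant."  # hypothetical

def to_chat_example(turns):
    """Convert (role, text) turns into one chat-format training example."""
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    messages += [{"role": role, "content": text} for role, text in turns]
    return {"messages": messages}

def write_jsonl(path, convs):
    # One JSON object per line (JSON Lines), one conversation per object.
    with open(path, "w", encoding="utf-8") as f:
        for turns in convs:
            f.write(json.dumps(to_chat_example(turns)) + "\n")

write_jsonl("travel_finetune.jsonl", conversations)
```

Keeping each conversation as a separate line makes it easy to append new examples, deduplicate, or hold out a validation split later.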

Amazon Mechanical Turk in 2023: In-depth Evaluation

The distinct-1 metric is the number of unique unigrams in the model's responses divided by the total number of generated tokens. This evaluation dataset contains a random subset of 200 prompts from the English OpenSubtitles 2009 dataset (Tiedemann 2009). I really recommend that others join this platform; I got a lot of advantages from it. The NPS Chat Corpus is part of the Natural Language Toolkit (NLTK) distribution, which includes the complete NPS Chat Corpus along with numerous modules for working with the records.
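The unigram-diversity ratio described above (often called distinct-1, and distinct-n for n-grams in general) can be computed in a few lines. This is a minimal sketch that assumes simple lowercase whitespace tokenization; evaluation toolkits may tokenize differently, which changes the exact numbers.

```python
def distinct_n(responses: list[str], n: int = 1) -> float:
    """Unique n-grams across all responses divided by total generated tokens."""
    unique = set()
    total_tokens = 0
    for response in responses:
        toks = response.lower().split()  # assumption: whitespace tokenization
        total_tokens += len(toks)
        for i in range(len(toks) - n + 1):
            unique.add(tuple(toks[i:i + n]))
    return len(unique) / total_tokens if total_tokens else 0.0

# Hypothetical model outputs: a repeated generic reply lowers diversity.
responses = ["i do not know", "i do not know", "the weather is nice"]
print(distinct_n(responses, 1))  # 8 unique unigrams / 12 tokens
```

A model that keeps answering "i do not know" scores low here even if each reply is fluent, which is why diversity metrics are reported alongside accuracy-style metrics.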

- Obtaining appropriate data has long been a problem for many AI research companies.

- Reading your chatbot's conversations with users helps you analyze its performance.

- To overcome these challenges, your AI-based chatbot must be trained on high-quality training data.

- This helps resolve customer requests without human intervention.

- Your chatbot won't be aware of these utterances and will treat matching data as separate data points.
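Reading conversation logs becomes more systematic if you count how often the bot fails to match an intent and collect the messages that caused it. The sketch below assumes a hypothetical log format (one dict per bot turn with `user`, `intent`, and `fallback` fields); adapt the field names to whatever your chatbot platform actually exports.

```python
from collections import Counter

# Hypothetical log: one dict per bot turn.
conversation_log = [
    {"user": "book a flight to Rome", "intent": "book_flight", "fallback": False},
    {"user": "whats the baggage limit", "intent": None, "fallback": True},
    {"user": "cancel my booking", "intent": "cancel_booking", "fallback": False},
    {"user": "baggage allowance?", "intent": None, "fallback": True},
]

def fallback_rate(log):
    """Share of bot turns where no intent matched and a fallback reply was sent."""
    if not log:
        return 0.0
    return sum(turn["fallback"] for turn in log) / len(log)

def unmatched_messages(log):
    """User messages that triggered a fallback: candidates for new training phrases."""
    return Counter(turn["user"].lower() for turn in log if turn["fallback"])

print(fallback_rate(conversation_log))  # 0.5
print(unmatched_messages(conversation_log).most_common(5))
```

The two unmatched baggage questions are the kind of unseen utterance variations the last bullet warns about; adding them to a single intent's training phrases lets the bot treat them as one request instead of unrelated inputs.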

It helps to understand a few generally accepted principles behind a good dataset. You can support this repository by adding your dialogs to the current topics or to a topic of your choice, and in your own language. Once you are done, make sure to add key entities to the customer-related information you have shared with the Zendesk chatbot. When you have the data, identify the intents of the users who will be using the product.
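Intents and key entities are typically stored together for each training utterance. The sketch below uses a hypothetical schema (not Zendesk's or any other platform's format): each utterance carries one intent label plus entity spans given as character offsets, and a `validate` method catches stale or off-by-one offsets before the data is used for training.

```python
from dataclasses import dataclass, field

@dataclass
class Entity:
    label: str  # hypothetical entity type, e.g. "city"
    start: int  # character offset, inclusive
    end: int    # character offset, exclusive

@dataclass
class Utterance:
    text: str
    intent: str
    entities: list[Entity] = field(default_factory=list)

    def entity_values(self) -> dict[str, str]:
        """Map each entity label to the substring it covers."""
        return {e.label: self.text[e.start:e.end] for e in self.entities}

    def validate(self) -> None:
        # Reject spans that are empty or fall outside the text.
        for e in self.entities:
            if not (0 <= e.start < e.end <= len(self.text)):
                raise ValueError(f"bad span for {e.label!r}: {e.start}-{e.end}")

example = Utterance(
    text="Where is my order to Berlin?",
    intent="track_order",
    entities=[Entity("city", 21, 27)],
)
example.validate()
print(example.entity_values())  # {'city': 'Berlin'}
```

Character offsets are used here rather than token indices so the annotation does not depend on a particular tokenizer.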

WhatsApp Opt-in Bot

When training AI chatbots, it is essential to continuously monitor and evaluate their performance. This can be done using metrics such as accuracy, precision, recall, and F1 score, which measure the chatbot's ability to correctly identify user intents and provide appropriate responses. By tracking these metrics, developers can identify areas where the chatbot is underperforming and make the adjustments needed to improve it. ChatEval offers evaluation datasets consisting of prompts that uploaded chatbots must respond to.
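The intent-level metrics named above can be computed from a list of true and predicted intent labels. This is a minimal pure-Python sketch with hypothetical labels; libraries such as scikit-learn provide equivalent, more complete implementations. Macro-averaged F1 weights every intent equally, so a rarely used intent that the bot always misses still pulls the score down.

```python
from collections import Counter

def intent_metrics(y_true: list[str], y_pred: list[str]) -> dict:
    """Accuracy plus per-intent precision, recall, F1, and macro-averaged F1."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("need two non-empty label lists of equal length")
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted intent p, but that was wrong
            fn[t] += 1  # the true intent t was missed
    per_intent = {}
    for label in set(y_true) | set(y_pred):
        prec = tp[label] / (tp[label] + fp[label]) if tp[label] + fp[label] else 0.0
        rec = tp[label] / (tp[label] + fn[label]) if tp[label] + fn[label] else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        per_intent[label] = {"precision": prec, "recall": rec, "f1": f1}
    macro_f1 = sum(m["f1"] for m in per_intent.values()) / len(per_intent)
    accuracy = sum(tp.values()) / len(y_true)
    return {"accuracy": accuracy, "macro_f1": macro_f1, "per_intent": per_intent}

# Hypothetical evaluation run.
y_true = ["book", "book", "cancel", "greet"]
y_pred = ["book", "cancel", "cancel", "greet"]
m = intent_metrics(y_true, y_pred)
print(round(m["accuracy"], 2), round(m["macro_f1"], 3))  # 0.75 0.778
```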

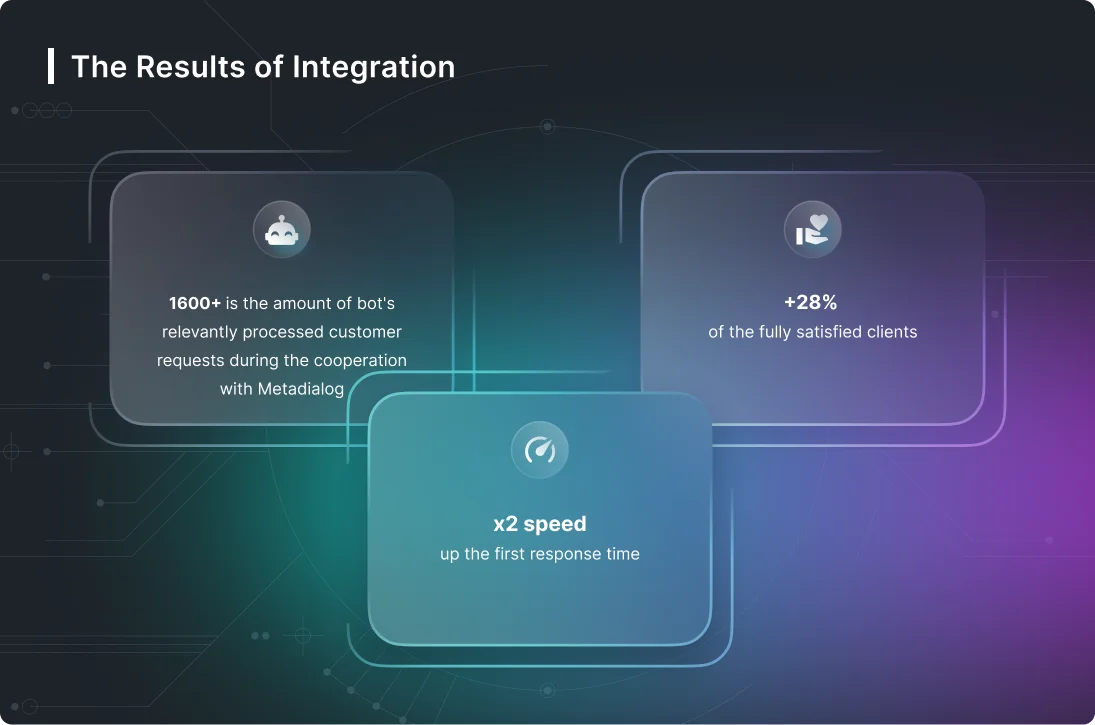

Businesses need to show customers why they should be chosen over the competition. Using chatbots can help make online customer service less tedious for employees. When Infobip was looking to prepare chatbots for its clients, it knew it needed a lot of data. For smaller projects, it had done data collection and annotation in-house, but with only one team member focused on data, the process was slow. Infobip estimated that it needed a large number of representative phrases per intent to make sure the chatbot was properly trained on phrase variations. Each phrase needed to be distinct enough that, together, the phrases covered the many ways a customer might word a request.
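One way to check whether each intent has enough genuinely different phrases is to normalize away trivial differences (case, punctuation, extra spaces) and count what remains. This is a sketch under assumptions: the `min_unique` threshold and the sample phrases are hypothetical, since the actual number of phrases per intent Infobip targeted is not given here.

```python
import re

def normalize(phrase: str) -> str:
    # Lowercase, strip punctuation, and collapse whitespace so trivial
    # variants ("Track my order!" vs "track my order") count as one phrase.
    cleaned = re.sub(r"[^\w\s]", "", phrase.lower())
    return " ".join(cleaned.split())

def coverage_report(training_phrases: dict[str, list[str]], min_unique: int) -> dict[str, int]:
    """Return intents whose count of distinct normalized phrases is below min_unique."""
    report = {}
    for intent, phrases in training_phrases.items():
        unique = {normalize(p) for p in phrases}
        if len(unique) < min_unique:
            report[intent] = len(unique)
    return report

# Hypothetical training phrases per intent.
phrases = {
    "track_order": ["Track my order!", "track my order", "Where is my package?"],
    "cancel_order": ["Cancel my order", "I want to cancel", "Stop my order", "Please cancel it"],
}
print(coverage_report(phrases, min_unique=3))  # {'track_order': 2}
```

Exact-match normalization only catches surface duplicates; near-paraphrases would need a similarity measure on top of this.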

Maximizing ROI: The Business Case For Chatbot-CRM Integration

There are two main types of chatbots: context-based and keyword-based. Datasets are a fundamental resource for training machine learning models, and they are crucial for applying machine learning techniques to specific problems. These operations require a much more complete understanding of paragraph content than previous datasets required. Encouraging users to provide feedback on their interactions with the chatbot can help identify issues and areas for improvement.
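The difference between keyword-based and context-based chatbots shows up on follow-up questions. The toy sketch below is deliberately simplified: the keyword table is hypothetical, and the "context" is just the previous intent carried forward, whereas real context-based bots use dialogue state tracking or models conditioned on the conversation history.

```python
import re

# Hypothetical keyword table: any listed keyword triggers the intent.
KEYWORDS = {
    "order_status": {"order", "package", "delivery"},
    "refund": {"refund", "money", "return"},
}

def keyword_intent(message: str):
    """Keyword-based: look only at the current message; None if nothing matches."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    for intent, keys in KEYWORDS.items():
        if words & keys:
            return intent
    return None

class ContextBot:
    """Minimal context-based behavior: remember the last intent for follow-ups."""

    def __init__(self):
        self.last_intent = None

    def classify(self, message: str):
        intent = keyword_intent(message)
        if intent is None:
            # No keyword matched; assume the user is continuing the previous topic.
            intent = self.last_intent
        self.last_intent = intent
        return intent

bot = ContextBot()
print(keyword_intent("And how long will that take?"))  # None
print(bot.classify("Where is my package?"))             # order_status
print(bot.classify("And how long will that take?"))     # order_status
```

The keyword-only function has no answer for the follow-up, while the context-carrying version keeps the conversation on the delivery topic.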

Think You’ve Mastered Customer Interactions? Think Again – CMSWire


Posted: Tue, 10 Oct 2023 07:00:00 GMT

At all points in the annotation process, our team ensures that no data breaches occur. If you have more than one paragraph in a dataset record, you may wish to split it into multiple records. This is not always necessary, but it can make your dataset more organized.
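Splitting a multi-paragraph record can be as simple as breaking its text on blank lines and copying the other fields to each piece. This sketch assumes records are dicts with the text under a `text` key and that paragraphs are separated by blank lines; the `paragraph_index` field and the sample record are hypothetical additions so the pieces can be traced back to their source.

```python
import re

def split_paragraphs(record: dict, text_key: str = "text") -> list[dict]:
    """Split one record into several, one per blank-line-separated paragraph.

    Other fields are copied to each new record, plus a paragraph index.
    """
    parts = re.split(r"\n\s*\n", record[text_key])
    paragraphs = [p.strip() for p in parts if p.strip()]
    out = []
    for i, para in enumerate(paragraphs):
        new = dict(record)  # shallow copy keeps id and other metadata
        new[text_key] = para
        new["paragraph_index"] = i
        out.append(new)
    return out

# Hypothetical FAQ record with two paragraphs.
record = {"id": "faq-12", "text": "Shipping takes 3-5 days.\n\nReturns are free within 30 days."}
for r in split_paragraphs(record):
    print(r)
```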

How to Fine-Tune ChatGPT with Training Data

Read more at https://www.metadialog.com/.